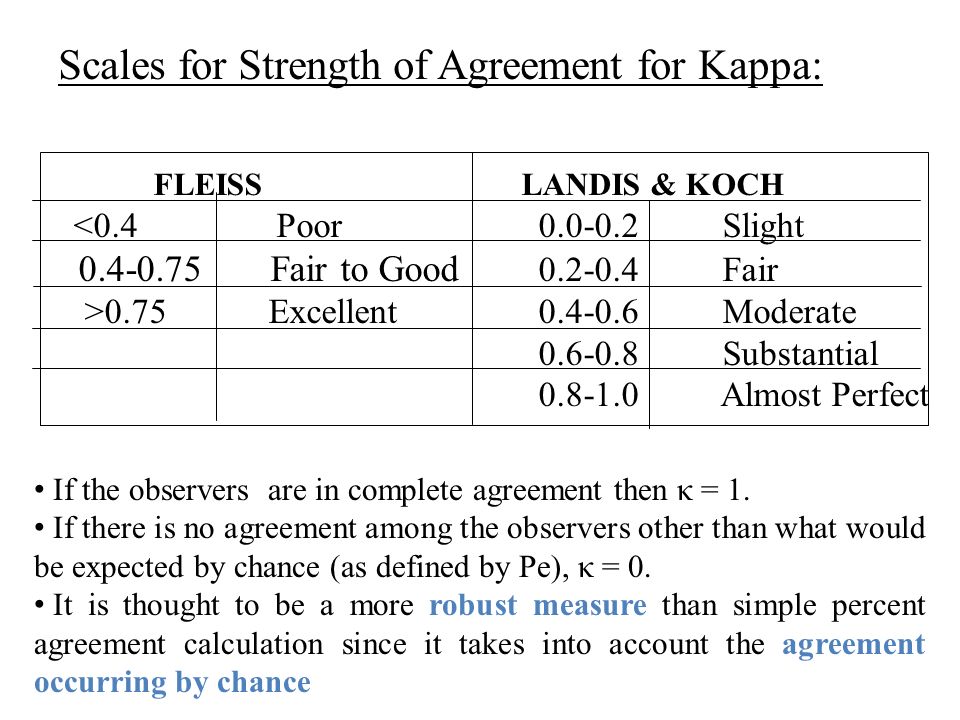

Inter-observer variation can be measured in any situation in which two or more independent observers are evaluating the same thing. Kappa is intended to… - ppt download

The reliability of immunohistochemical analysis of the tumor microenvironment in follicular lymphoma: a validation study from the Lunenburg Lymphoma Biomarker Consortium | Haematologica

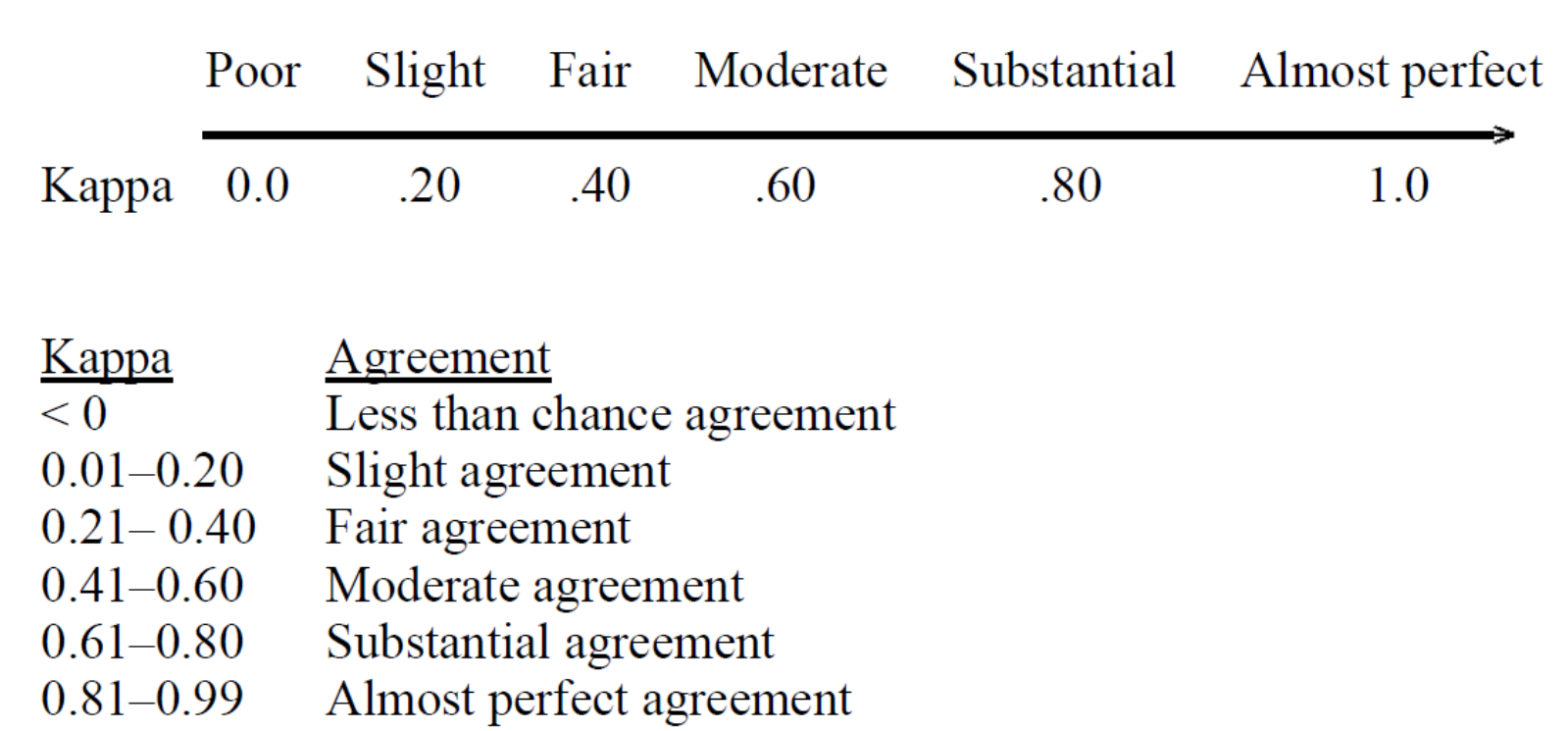

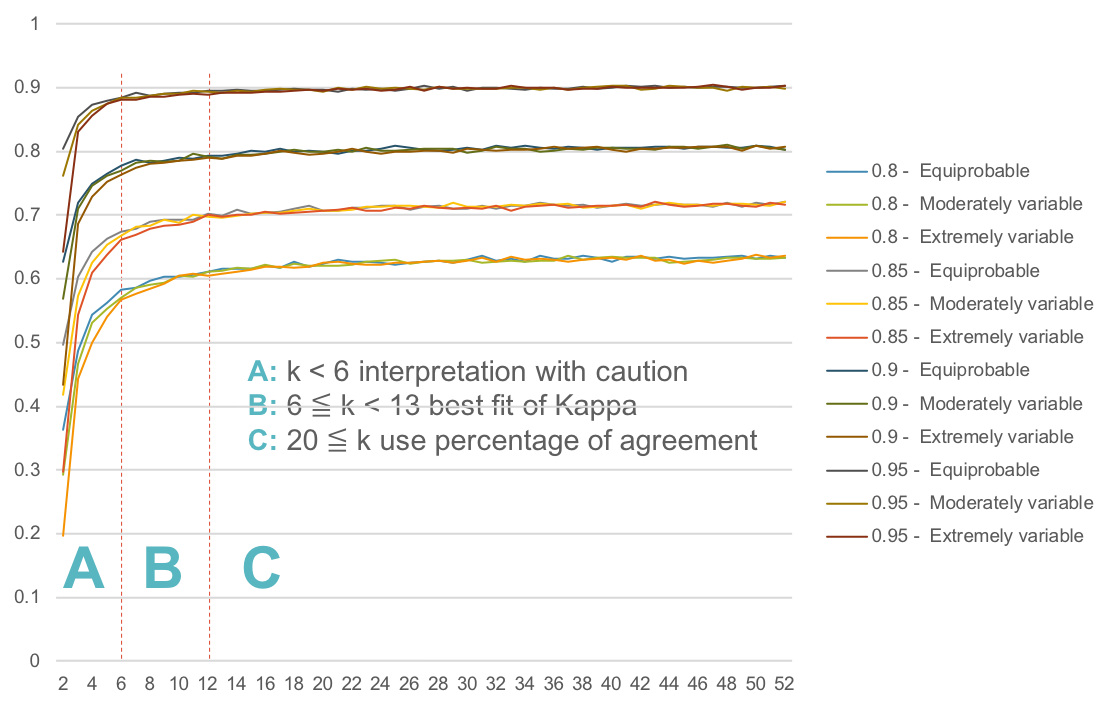

K. Gwet's Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients | Inter-rater reliability: Cohen kappa, Gwet AC1/AC2, Krippendorff Alpha

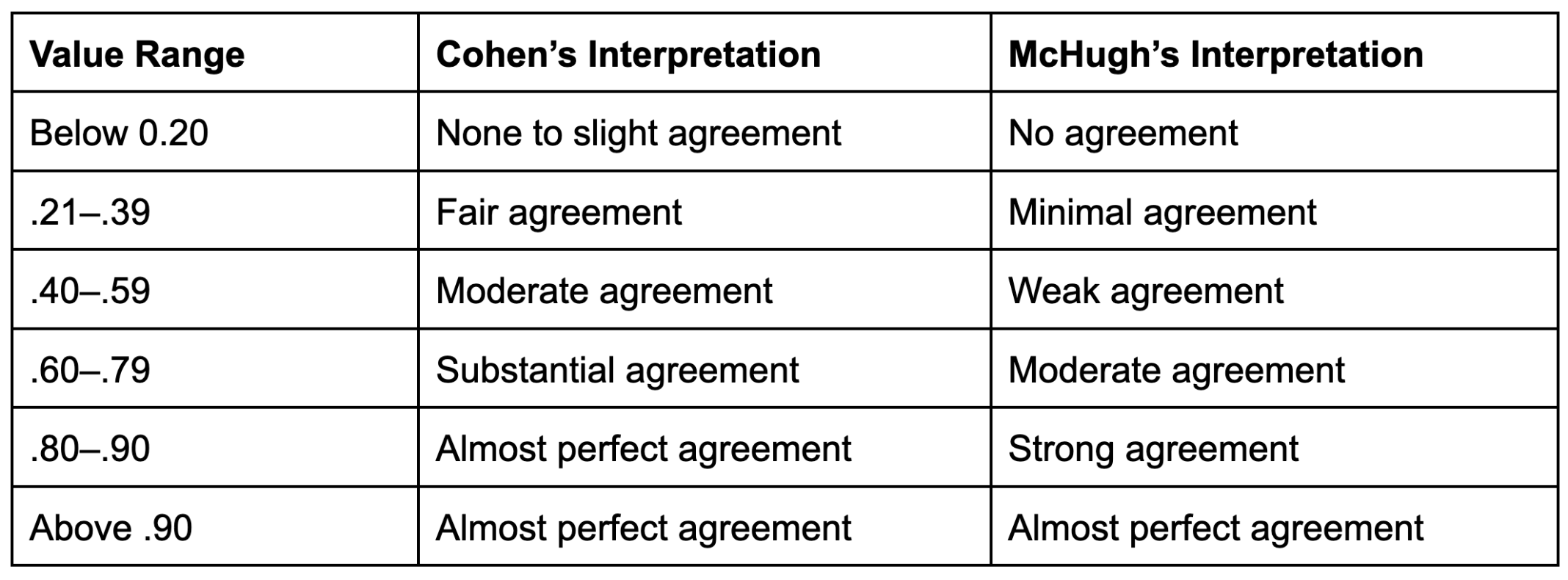

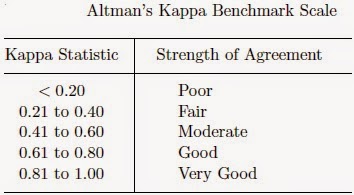

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

![\[PDF\] A Simplified Cohen's Kappa for Use in Binary Classification Data Annotation Tasks | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/0efde960b887a6daffffc34d4e729965c1c972ee/6-Table3-1.png)